AI-Assisted Development: Why Human Oversight Still Defines Engineering Excellence

AI models that generate code have fundamentally shifted the bottleneck in software development. Writing code is no longer the hard part - AI tools deliver real productivity gains here. The real challenges now lie in understanding what to build, how to design it so it scales, and how to operate it safely on Day 2. A system that appears solid at launch but later collapses under load, exposes vulnerabilities, or becomes unmaintainable is not a coding failure - it’s a design failure.

At the same time, a growing number of incidents show what happens when AI autonomy grows faster than human oversight. Recent outages at a major tech company were caused by “Gen‑AI assisted changes” [3]. Security vulnerabilities can now be discovered and exploited at machine speed: in one recent case, an AI agent breached a major firm's internal AI platform within two hours [9]. Both underscore a clear need for better practices and stronger governance. These concerns are echoed in the 2026 State of AI in Security & Development Report by Aikido [4], which surveyed 450 CISOs, developers, and AppSec engineers. Only 21% of respondents believe AI will ever write secure code without human oversight, a critical datapoint that reinforces the importance of disciplined review and architectural rigor.

Growing AI Autonomy - and the Oversight Gap

Anthropic’s analysis of how users work with Claude models [1] shows a rising tendency toward auto‑approving model actions: around 20% for new users, climbing to 40% for highly experienced users with 750+ sessions. A surface‑level interpretation might suggest that users become lax in their oversight. Anthropic argues the opposite: as users gain experience, they also interrupt the model more often while it is working, which indicates more attention, not less.

Their findings also show that the more complex the task, the more frequently the model stops itself and requests clarification. The takeaway is clear: human oversight is indispensable, and higher‑risk use cases especially require additional guardrails such as approval workflows and usage‑pattern monitoring to ensure safety [1].

Disempowerment Risks in Real‑World LLM Usage

In another paper, “Who’s in Charge? Disempowerment Patterns in Real-World LLM Usage” [2], Anthropic categorizes the risks of over‑reliance on AI and ranks them by prevalence. The most frequent risks of distorting user judgment were:

- Reality distortion: from strictly factual responses (None) to reinforcing false beliefs (Severe).

- Value‑judgment distortion: from helping clarify user values (None) to outsourcing moral decisions entirely (Severe).

- Action distortion: from assisting with tasks while the user stays in control (None) to delegating end‑to‑end decisions to the AI (Severe).

Although the paper does not focus on software engineering, these risks clearly apply to it. According to [1], Claude is used for software engineering about 50% of the time, which makes distorted decision‑making there particularly impactful. In engineering contexts, it could manifest as:

- false reassurance about code quality or performance,

- architectural missteps that become costly later,

- assumptions about requirements that the model shouldn’t be making,

- growing frequency of auto‑approvals coupled with insufficient oversight.

All of these can quietly steer teams toward brittle systems.

The Posedio Way: AI-Assisted Development with Deliberate Guardrails

At Posedio, we embrace AI as a core part of our engineering workflow - but deliberately. Rather than letting AI autonomy expand unchecked, we’ve built a development process that captures AI’s productivity benefits while embedding human judgment at the moments where it matters most.

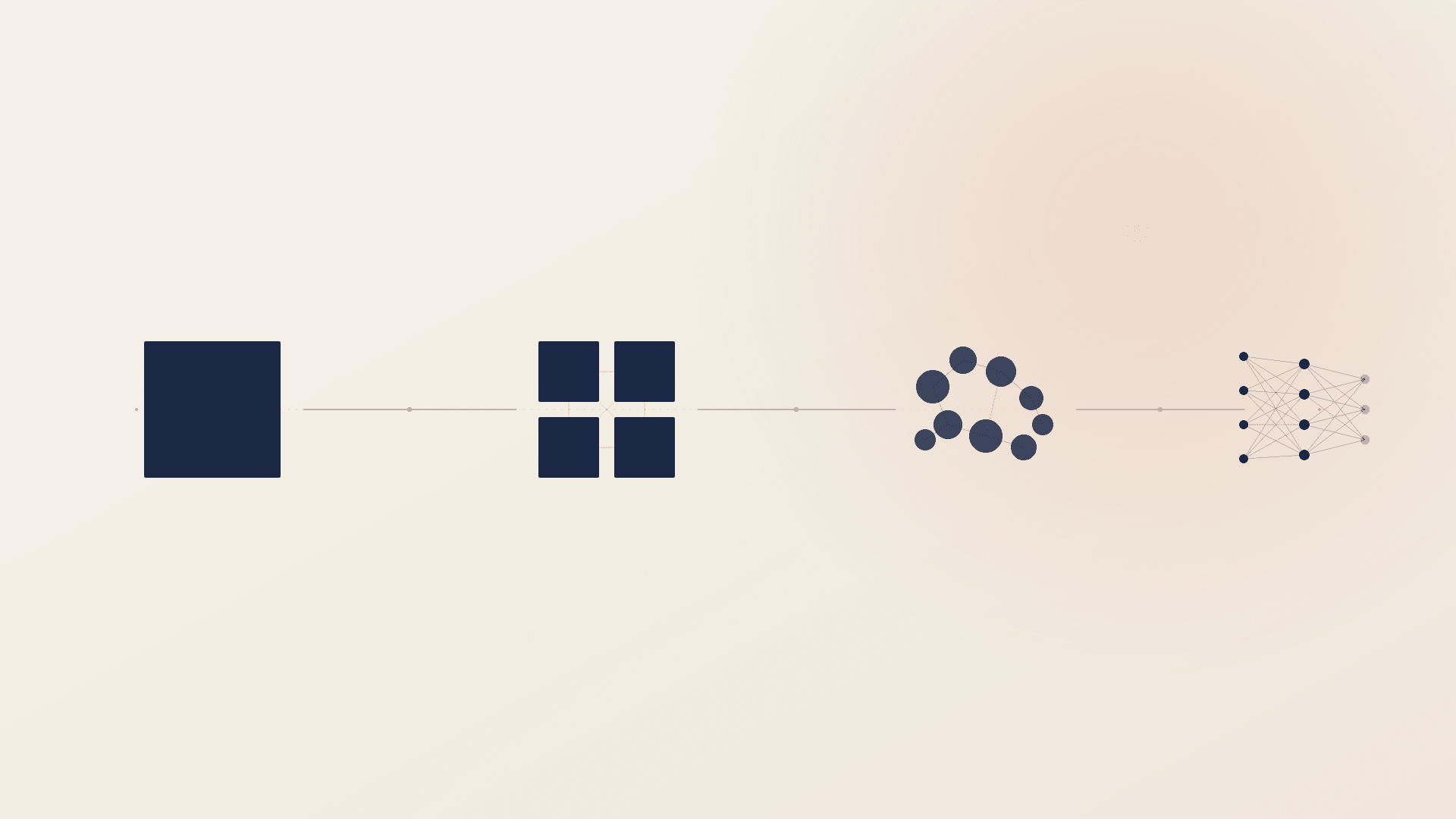

Since our founding, we’ve prioritized not just the act of writing code, but the design and operational excellence that determine whether a system succeeds long‑term. Software must be purposeful on Day 0 (design), robust on Day 1 (build), and dependable on Day 2 (operate). Here’s how that works in practice.

1. Design Before Code

Every significant effort starts with a Design Document - our first and most important guardrail. It forces early, explicit discussion around architecture, trade‑offs, and non‑functional requirements such as security, scalability, and observability.

AI is a tool here: helpful for exploring alternatives and validating assumptions. But the document must represent human intent. AI proposes; the team decides and owns the plan.

Human oversight remains the linchpin of robust software engineering. Designing a project and its architecture is not merely a technical exercise; it is a conceptual and contextual mapping of real-world problems onto digital solutions. Outsourcing this contextualization to a tool that itself depends on being given the right context is bound to deliver poor results, and will inevitably lead to:

- Degraded Maintainability: Developers struggle to understand the intent behind the code, making every update a risky endeavor.

- Architectural Drift: The system becomes a "Big Ball of Mud," where boundaries blur and a change in one area causes unexpected failures in another.

- Feature Stagnation: The disconnect between the domain and the implementation makes it increasingly difficult - and expensive - to iterate on new business requirements.

In short: own your domain before it owns you.

2. Iterative Delivery with Real Oversight

We deliver in small increments, with strict automated gates:

- static analysis

- vulnerability scanning

- license compliance

- code coverage

- policy‑as‑code checks

These aren’t suggestions; they are hard stops.
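The idea of gates as hard stops can be sketched as a small aggregator in which any single failed gate blocks the merge. This is a minimal illustration, not Posedio's actual pipeline. Only three of the gates are modeled, and the function names, the 80% coverage threshold, the blocking severities, and the license allow-list are all hypothetical. In practice each result would come from the output of a real scanning or coverage tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateResult:
    name: str
    passed: bool
    detail: str = ""


def evaluate_gates(results: list[GateResult]) -> tuple[bool, list[str]]:
    """Return (mergeable, failures); a single failed gate blocks the merge."""
    failures = [f"{r.name}: {r.detail}" for r in results if not r.passed]
    return (not failures, failures)


def coverage_gate(covered: int, total: int, minimum: float = 0.80) -> GateResult:
    # Hypothetical threshold: at least 80% line coverage.
    ratio = covered / total if total else 1.0
    return GateResult("code coverage", ratio >= minimum,
                      f"{ratio:.0%} (minimum {minimum:.0%})")


def vulnerability_gate(findings: dict[str, int],
                       blocking: tuple[str, ...] = ("critical", "high")) -> GateResult:
    # Blocks on any finding at a blocking severity; lower severities do not block.
    count = sum(findings.get(severity, 0) for severity in blocking)
    return GateResult("vulnerability scanning", count == 0,
                      f"{count} blocking finding(s)")


def license_gate(licenses: set[str], allowed: frozenset[str]) -> GateResult:
    # Every dependency license must appear on an explicit allow-list.
    disallowed = sorted(licenses - allowed)
    return GateResult("license compliance", not disallowed,
                      ", ".join(disallowed) or "ok")


# Example inputs; a real pipeline would parse these from tool reports.
results = [
    coverage_gate(covered=850, total=1000),
    vulnerability_gate({"critical": 0, "high": 1, "low": 4}),
    license_gate({"MIT", "Apache-2.0", "AGPL-3.0"},
                 frozenset({"MIT", "Apache-2.0", "BSD-3-Clause"})),
]
mergeable, failures = evaluate_gates(results)
print(mergeable)  # False
for failure in failures:
    print(failure)
```

Because each gate is a pure function of tool output, every hard stop can be tested on its own.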

Our code reviews go beyond logic checks. Each PR is also a checkpoint against architectural drift, the silent accumulation of small changes that reshape a system without conscious decision. Reviewers ask not only “Does this work?” but “Is this the right shape for the system?”
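Part of the drift check can be made mechanical so that reviewers spend their attention on the harder question of shape. One common technique, often called an architecture fitness function, fails the build when a module imports across a forbidden layer boundary. The sketch below uses Python's standard `ast` module. The package names `domain`, `infrastructure`, and `api`, and the layering rule itself, are hypothetical examples. This is not a description of Posedio's tooling.

```python
import ast

# Hypothetical layering rule: code in the "domain" package must not
# depend on the "infrastructure" or "api" packages.
FORBIDDEN_DEPENDENCIES: dict[str, set[str]] = {"domain": {"infrastructure", "api"}}


def imported_packages(source: str) -> set[str]:
    """Return the top-level package names imported by a module's source."""
    packages: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            packages.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Relative imports (level > 0) are ignored for simplicity.
            packages.add(node.module.split(".")[0])
    return packages


def boundary_violations(package: str, source: str) -> set[str]:
    """Return the forbidden packages that a module in `package` imports."""
    return imported_packages(source) & FORBIDDEN_DEPENDENCIES.get(package, set())


example_module = """
import json
from domain.orders import Order
from infrastructure.db import Session
"""
print(boundary_violations("domain", example_module))  # {'infrastructure'}
```

A check like this only catches violations of rules someone has already written down. Recognizing that a boundary should exist, or should move, remains a human review decision.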

Code review is time-consuming: Heander et al. [5] report that it accounts for approximately 10–20% of developers' total time. AI can drastically reduce the time reviews take while potentially maintaining high code quality, but code reviews offer benefits beyond the code itself. Interpersonal advantages, such as knowledge transfer, shared code ownership, and team awareness, remain vital. Consequently, many experts argue for AI-based systems that support human reviewers rather than replace them [6][7][8].

At Posedio, we follow a clear rule: if an engineer cannot clearly explain their change, it does not get merged. Understanding is a fundamental merge criterion, regardless of the guardrails AI and tooling provide.

3. Operability from the Start

We ship code together with:

- comprehensive documentation

- Architecture Decision Records (ADRs)

- OpenAPI definitions

Observability is designed upfront and validated in production. With deep SRE experience, we know what operational readiness looks like:

- SLO‑driven alerting with useful metrics

- meaningful alerting, not noise

- runbooks aligned with real‑world behavior
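SLO-driven alerting usually means paging on how fast the error budget is being consumed rather than on raw error counts. Google's SRE Workbook describes a common formulation called multiwindow, multi-burn-rate alerting. It defines the burn rate as the observed error rate divided by the error budget (1 - SLO), and it pages only when both a long window and a short window exceed a threshold. The sketch assumes a 99.9% availability SLO over 30 days. Under that assumption, a threshold of 14.4 corresponds to spending 2% of the monthly budget in one hour (14.4 × 1 h / 720 h = 0.02). The names and numbers are illustrative and do not describe Posedio's alerting setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Window:
    """Request counts observed over one alerting window (e.g. 1 h or 5 min)."""
    errors: int
    requests: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def burn_rate(window: Window, slo_target: float) -> float:
    """Budget consumption speed: 1.0 spends the budget exactly over the SLO period."""
    error_budget = 1.0 - slo_target  # e.g. 0.001 for a 99.9% SLO
    return window.error_rate / error_budget


def should_page(long_window: Window, short_window: Window,
                slo_target: float = 0.999, threshold: float = 14.4) -> bool:
    # The long window (e.g. 1 h) shows the burn is significant; the short
    # window (e.g. 5 min) shows it is still happening, so alerts clear quickly.
    return (burn_rate(long_window, slo_target) >= threshold
            and burn_rate(short_window, slo_target) >= threshold)


# 2% errors over the last hour and 3% over the last 5 minutes: page.
print(should_page(Window(200, 10_000), Window(30, 1_000)))  # True
# Same hour, but the last 5 minutes have recovered to 0.5%: no page.
print(should_page(Window(200, 10_000), Window(5, 1_000)))   # False
```

The two-window requirement is one concrete way to keep alerting meaningful rather than noisy. The alert fires only when a burn is both large and ongoing, and it stops soon after the system recovers.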

Incidents loop back into the next design cycle, making each iteration a little more resilient.

4. Security as a Continuous Practice

Security isn’t a final gate - it’s a property built in throughout the lifecycle. This is especially critical when AI generates code faster than humans can reason about attack surfaces, data flows, or dependency risks. Treating security as a late‑stage checklist is a recipe for blind spots; treating it as continuous keeps the system trustworthy.

The combination of powerful AI tools and complex engineering systems demands a new kind of discipline. At Posedio, we pair AI‑driven efficiency with intentional human oversight, strong design foundations, and operational focus. No single rule guarantees success; it is the ecosystem of practices working together that lets us ship software we understand, stand behind, and can maintain. By integrating insights from emerging research, we keep refining a process that can scale safely alongside the rapid evolution of AI.

Ownership in the Age of Autonomy

The shift toward AI-generated code isn't a reason to relax our standards - it’s a reason to sharpen them. As AI handles the "how" of syntax, the "why" of architecture becomes our most valuable asset. At Posedio, we believe that the true measure of a senior engineer in 2026 isn't how much code they can produce, but how much of that code they truly own.

AI has not lowered the barrier to building software - it has lowered the barrier to building software that nobody fully understands. The principles that software engineers have refined over decades still apply: someone needs to be able to read, debug, secure, and explain what was built. That hasn't changed. Only the pace has.

By combining AI's speed with deliberate human judgment, sound design, and an uncompromising focus on quality, we aren't just shipping faster; we’re shipping software that lasts. AI is a powerful co-pilot, but at the end of the day, the responsibility for the destination, and for the safety of the journey, remains firmly in human hands.

Sources

[1] Anthropic, "Measuring AI agent autonomy in practice", 2025. https://www.anthropic.com/research/measuring-agent-autonomy

[2] Anthropic, "Who's in Charge? Disempowerment Patterns in Real-World LLM Usage", arXiv, 2026. https://arxiv.org/abs/2601.19062

[3] Financial Times, "Amazon holds engineering meeting following AI-related outages", 2025. https://www.ft.com/content/7cab4ec7-4712-4137-b602-119a44f771de

[4] Aikido, "State of AI in Security & Development 2026", 2026. https://www.aikido.dev/state-of-ai-security-development-2026

[5] E. Heander et al., "Support, Not Automation: Towards AI-supported Code Review for Code Quality and Beyond", ACM, 2025. https://dl.acm.org/doi/10.1145/3696630.3728505

[6] Yonatha et al., "AICodeReview: Advancing Code Quality with AI-Enhanced Reviews", SSRN, 2024. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4575968

[7] M. Unterkalmsteiner et al., "Help Me to Understand this Commit! – A Vision for Contextualized Code Reviews", ACM, 2024. https://dl.acm.org/doi/10.1145/3643796.3648447

[8] Wang et al., "Unity Is Strength: Collaborative LLM-Based Agents for Code Reviewer Recommendation", ACM, 2024. https://dl.acm.org/doi/10.1145/3691620.3695291

[9] Financial Times, "McKinsey rushes to fix AI system after hacker exposes flaws", 2025. https://www.ft.com/content/004e785e-8e17-4cb3-8e5a-3c36190bc8b2